Using NVIDIA A100’s Multi-Instance GPU to Run Multiple Workloads in Parallel on a Single GPU

Aug 26, 2021 in PSAP | blog-postToday, my work on benchmarking NVIDIA A100 Multi-Instance GPUs running multiple AI/ML workloads in parallel has been published on OpenShift blog:

- Using NVIDIA A100’s Multi-Instance GPU to Run Multiple Workloads in Parallel on a Single GPU

- PDF archive

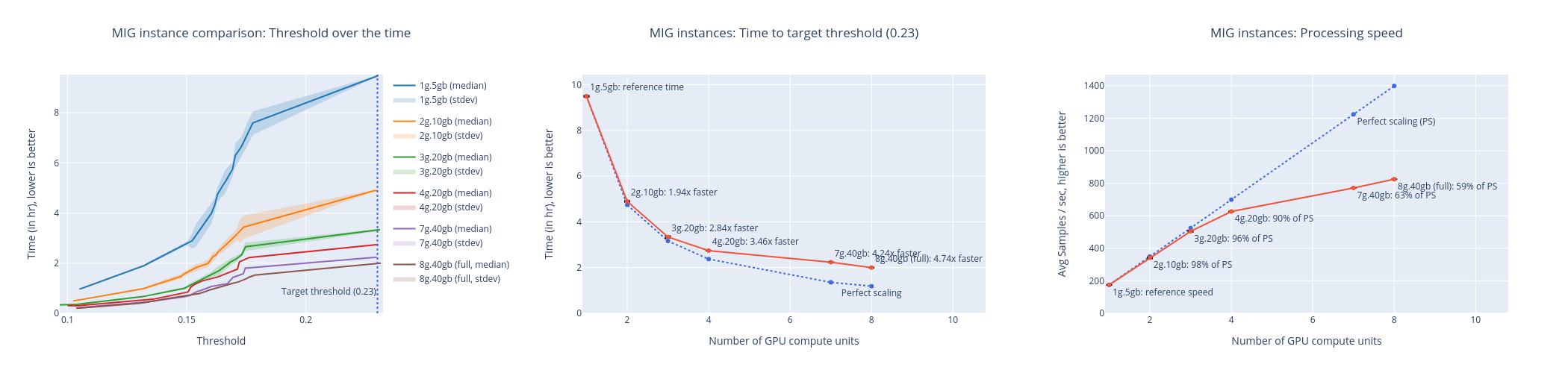

The new Multi-Instance GPU (MIG) feature lets GPUs based on the NVIDIA Ampere architecture run multiple GPU-accelerated CUDA applications in parallel in a fully isolated way. The compute units of the GPU, as well as its memory, can be partitioned into multiple MIG instances. Each of these instances presents as a stand-alone GPU device from the system perspective and can be bound to any application, container, or virtual machine running on the node. At the hardware-level, the MIG instance has its own dedicated resources (compute, cache, memory), so the workload running in one instance does not affect what is running on the other ones.

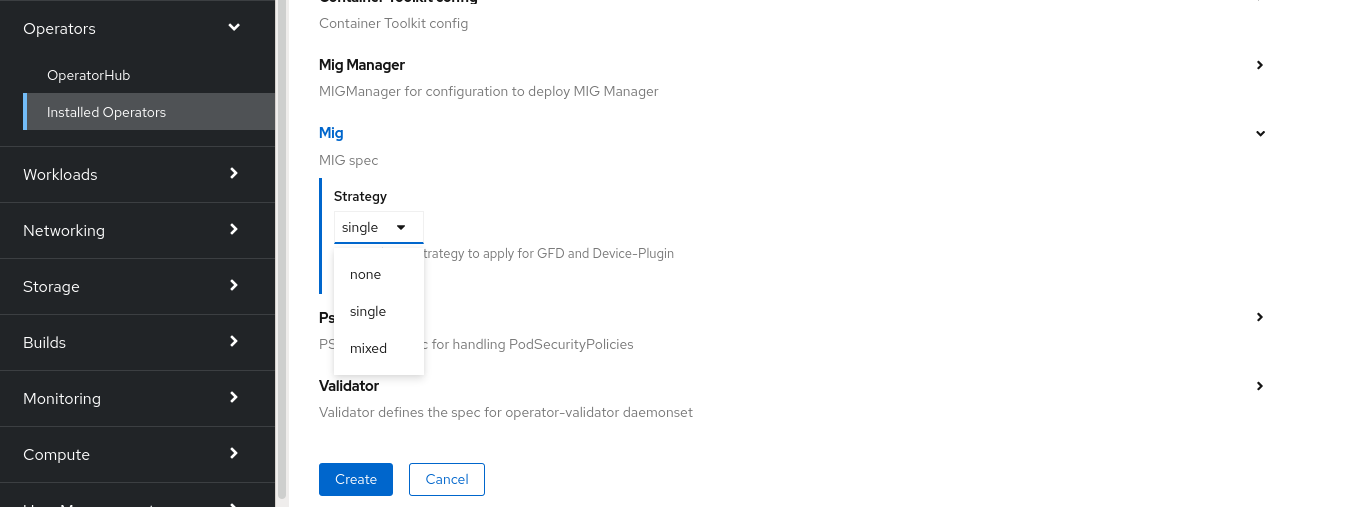

In collaboration with NVIDIA, we extended the GPU Operator to give OpenShift users the ability to dynamically reconfigure the geometry of the MIG partitioning. The geometry of the MIG partitioning is the way hardware resources are bound to MIG instances, so it directly influences their performance and the number of instances that can be allocated. The A100-40GB, which we used for this benchmark, has eight compute units and 40 GB of RAM. When the MIG mode is enabled, the eighth instance is reserved for resource management.